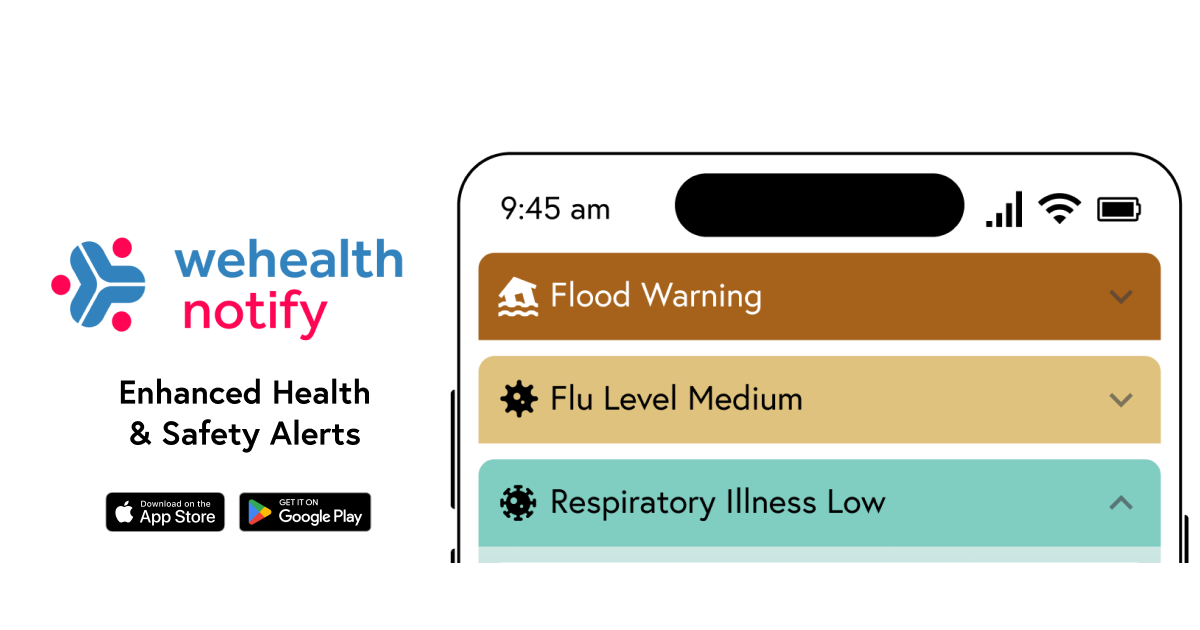

Wehealth Notify now enhances NWS alerts across all of the United States

With the latest release today, Wehealth Notify now supports enhanced alerts for all 3,000+ counties & territories in the United States. Wehealth...

5 min read

Joanna Masel: May 12, 2021 1:24:57 PM


A: For any new technology, people want to know how it helps, and to be reassured that it does no harm. The Exposure Notification system keeps data locally on users’ phones, and does not map out contact networks. First, do no harm: check. But does it do good?

For the U.K., we now have the answer: a resounding yes. A new paper out in Nature estimates that the app averted ~600,000 infections - about 20% of what the total would otherwise have been - and saved thousands of lives. They used two distinct methods to reach this conclusion. First, they measured how many people received an exposure notification and then later tested positive for COVID-19, and made an educated guess as to how much those infected-and-warned users changed their behavior, thus estimating the benefits from quarantine. Second, they calculated how many fewer cases there were in regions of the U.K. with high app usage compared to regions of the U.K. with low app usage, while doing everything they could to control for socioeconomic and other differences between those regions.
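The two headline figures above pin down the rough scale of the epidemic with and without the app. The sketch below is only back-of-envelope arithmetic on the rounded numbers quoted in this post, not a reproduction of the paper's analysis; the function name `implied_totals` is made up for illustration.

```python
# Rounded figures quoted in the post; both are approximate.
AVERTED = 600_000      # infections averted by the app
AVERTED_SHARE = 0.20   # averted infections as a share of the no-app total


def implied_totals(averted, averted_share):
    """Return (counterfactual_total, observed_total) implied by the two figures.

    counterfactual_total: infections expected had the app not existed
    observed_total: infections that still occurred with the app in use
    """
    if not 0 < averted_share <= 1:
        raise ValueError("averted_share must be in (0, 1]")
    counterfactual_total = averted / averted_share
    observed_total = counterfactual_total - averted
    return counterfactual_total, observed_total


counterfactual, observed = implied_totals(AVERTED, AVERTED_SHARE)
print(f"without the app: ~{counterfactual:,.0f} infections")
print(f"with the app:    ~{observed:,.0f} infections")
```

Because both inputs are rounded, the outputs are order-of-magnitude guides only.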

Both methods show a lot of benefit, but interestingly, the second method shows greater benefit than the first. My suspicion is that this is because notifications act as an early warning system that the disease is currently spreading in one’s social network. Most people who go into quarantine after exposure turn out not to be infected, but quarantine might in many cases be well-timed to prevent them from catching COVID-19 in the first place. In other words, exposure notification technology might be even more effective than models of its direct effects would predict.

For those of us not in the U.K., what does this tell us about other implementations of Exposure Notification? At one level, the results are not a surprise. We know that contact tracing works if done well enough, and we know that contact tracing needs to be faster (as enabled by this technology). Logically, of course this technology will work, if it is implemented competently enough (no easy task when working at pandemic speed), and adopted sufficiently (no easy task for an opt-in app whose purpose is to impose quarantine on you so that you can altruistically help others). Both of these conditions were achieved in the U.K. Adoption is a huge part of achieving success in the real world. Part of what is impressive in the U.K. is that 28% of the total population used the app (49% of those who could), and that 72% of infected app users shared their diagnoses.
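To see why adoption matters so much, it helps to work through the percentages above. The sketch below derives the share of the population able to run the app from the two quoted adoption figures, then makes a deliberately naive estimate of how many infector-contact pairs could produce a notification. The random-mixing assumption is mine, not the paper's: in reality app users cluster socially, so real coverage among app users' contacts is likely higher than this floor suggests.

```python
# Figures quoted in the post.
POPULATION_UPTAKE = 0.28  # share of the total population using the app
ELIGIBLE_UPTAKE = 0.49    # share of those who could use the app
SHARE_RATE = 0.72         # share of infected app users who shared a diagnosis

# If 28% of everyone is 49% of those who could use the app, the eligible
# group is 0.28 / 0.49 of the population.
eligible_share = POPULATION_UPTAKE / ELIGIBLE_UPTAKE

# ASSUMPTION (not from the paper): app use is independent between the
# infected person and each contact, i.e. random mixing. A notification
# needs both ends of the pair to be running the app.
pair_coverage = POPULATION_UPTAKE ** 2

# The infected user must also share the diagnosis for anyone to be warned.
notifiable_pairs = pair_coverage * SHARE_RATE

print(f"population able to use the app: {eligible_share:.1%}")
print(f"pairs where both use the app:   {pair_coverage:.1%}")
print(f"pairs that can be notified:     {notifiable_pairs:.1%}")
```

The squared term is the point of the exercise: under this simple model, doubling adoption roughly quadruples the number of contacts who can be warned.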

The details of implementation matter too. One interesting finding is the degree to which effectiveness improved when the NHS app upgraded its risk model. Overall, more than 6.1% of NHS-app-notified contacts have COVID-19, while in a September sample in Switzerland, it was only 3.2%. Both the improvement within the U.K. and the cross-country comparison confirm that when better risk assessment is used, more lives are saved, and greater reopening is possible. We should all upgrade our risk models. Luckily, the kind of data the U.K. collected should soon tell us more about predicting infection risk in the real world, as opposed to experiments that rely on mere models of infection risk, including primitive models such as 2-meter rules. Better still would be methods that give critical indoor vs outdoor information about risk, not just proximity information, although the Google/Apple API rules currently stand in the way of doing this. Again, it is not surprising that models that make better use of known infection risk factors better predict infection risk in the real world, but part of the scientific method is to directly demonstrate what might seem obvious - there can be surprises.

For me, one surprise was just how good exposure notifications were at predicting infection. The U.K. measure of 6.1% of app-notified contacts who later report a positive test, while impressively high, is actually a significant underestimate. It excludes those who weren’t eligible for testing because their infection was asymptomatic, those who did not seek testing even when symptomatic, those who received a false negative test result, and those who tested positive but did not report that test result to the app.
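One way to picture the size of that underestimate is to treat each excluded group as a filter that an infection has to pass through before it shows up in the app's data. The sketch below does this under a simple independence assumption. Only the 6.1% figure comes from this post; the four probabilities are hypothetical placeholders chosen for illustration, not measured values.

```python
OBSERVED_RATE = 0.061  # notified contacts who later reported a positive test (from the post)


def corrected_rate(observed_rate, filter_probabilities):
    """Scale an observed positive rate up by the chance an infection is counted.

    filter_probabilities: chance of passing each filter, assumed independent.
    """
    detection = 1.0
    for p in filter_probabilities:
        if not 0 < p <= 1:
            raise ValueError("each probability must be in (0, 1]")
        detection *= p
    return observed_rate / detection


# HYPOTHETICAL placeholder values, one per exclusion listed in the text.
filters = [
    0.70,  # infection was symptomatic, so eligible for testing
    0.80,  # symptomatic person actually sought a test
    0.90,  # test did not return a false negative
    0.80,  # positive result was reported to the app
]

estimate = corrected_rate(OBSERVED_RATE, filters)
print(f"observed: {OBSERVED_RATE:.1%}, corrected under these assumptions: {estimate:.1%}")
```

Swapping in different placeholder values changes the corrected figure considerably, which is the reason to treat 6.1% as a floor rather than a point estimate.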

It’s important to recognize that proving the obvious took pretty heroic effort from the U.K. team. They measured active users, not just downloads. They navigated a complex privacy landscape to gather anonymous analytic data on all users - not just those opting in - that linked events together to study how app-recorded exposure predicts a later positive test. Do not expect that similar results will soon also get reported retrospectively for your jurisdiction. Teams that did not invest in these heroic measures to quantify effectiveness despite strict privacy protections are simply not able to make similarly reliable reports of effectiveness. That doesn’t mean their technology is ineffective, just that nobody did the work to measure total effectiveness.

Nevertheless, we can be pretty certain that if your jurisdiction’s usage was lower, or your verification code delivery was less rapid and/or failed to scale when case counts rose, or your risk model was less sophisticated, then effectiveness was lower. Most jurisdictions suffer from one or more of these problems, but the good news is that it’s not too late to improve. Exposure notification technology continues to matter after vaccination, and indeed can still be useful after the pandemic, e.g. to fight influenza. So what can we learn from the U.K. study, to implement in other jurisdictions?

Many jurisdictions have struggled with app adoption rates and/or with rapid and scalable issuance of verification codes for positive diagnoses. For adoption, the key to the U.K.’s success was to have the app do other things too, things that got people to download the app. In particular, the QR code venue check-in system was critical to achieving high app adoption. Those not using the app needed to write their name and phone number at the door of a venue to assist with later contact tracing if needed. App users could simply scan a QR code and remain anonymous. In the end, that didn’t have a big impact on contact tracing via QR-code-based alerts, but it did lead to many more people downloading and activating the app. More broadly, the app was a one-stop shop for all things related to COVID-19.

The U.K. got people to report their positive diagnoses at such high rates by tightly integrating the app with testing. Germany has also taken this approach. If the app also serves as a test results delivery portal, then a diagnosis is more likely to be shared. The process of delivering verification codes needs not only to be rapid and scalable, but also to have the user experience in mind. Note that it’s not too late to get people to download the app when they go in to get a PCR test. Should they test positive, notifications will still go out to those they were in contact with from the time of download until they get the test result back, a period in which they might be quite infectious. In contrast, when users download the app only after they receive an SMS with a code related to their positive test, nobody gets notified.

One day, there will be a new pandemic. If we work now toward having a public health app in place, it won’t be such a mad scramble to make this technology effective next time. QR code check-in requirements will of course go away with the pandemic, and Google and Apple have said that exposure notification will too. But we can still have an app that is integrated with infectious disease testing and that provides accurate public health information to all. If we build that infrastructure now, we will be more ready next time, without the heroics that happened in the U.K. And maybe that will even be enough to nip an outbreak in the bud, and prevent the next pandemic altogether.

Have a question? Ask the professor: contact@wehealth.org


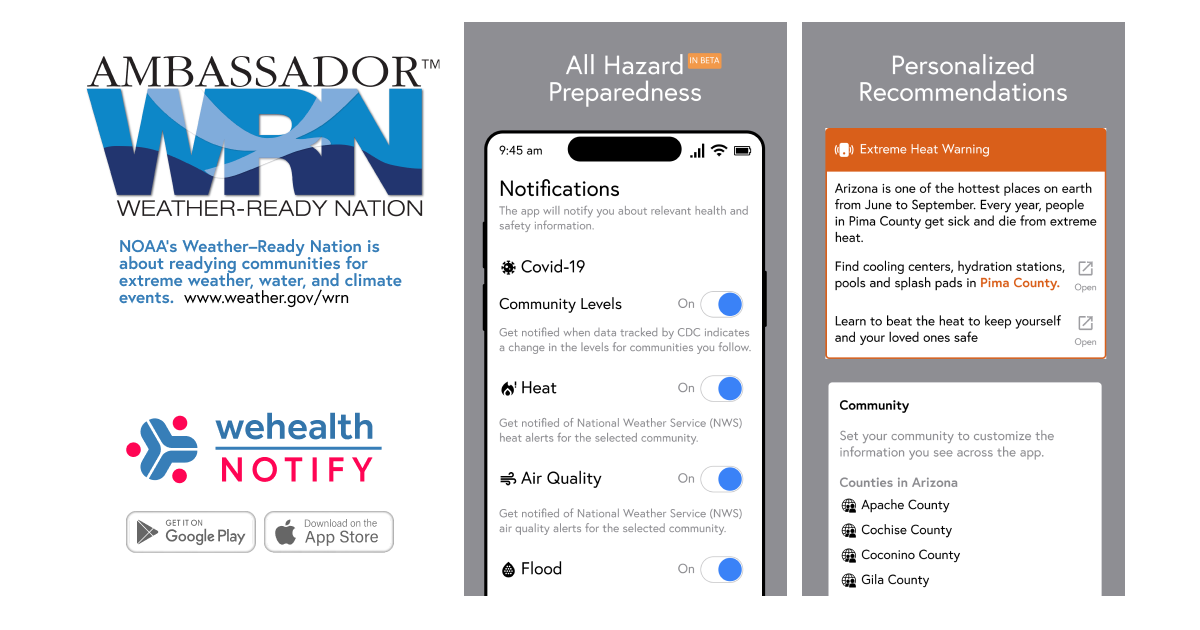

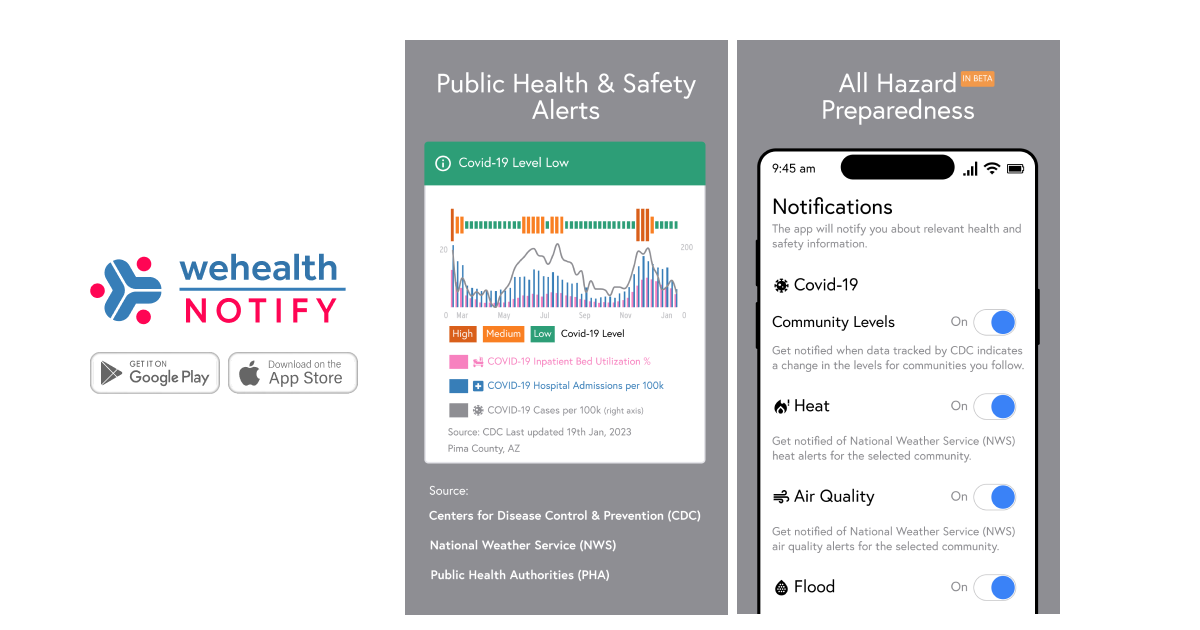

Wehealth is excited to be appointed as a NOAA Weather Ready Nation Ambassador. Weather–Ready Nation is about readying communities for extreme...

Apple and Google have decided to turn off the underlying exposure notification system currently used by Wehealth. This means that beginning on Sep...